I think I'm about as susceptible as any other human to having my opinions formed by someone else. All I have to do for this to happen is read someone else's opinion about something, before seeing that something for myself.

So when I found out about Economic Enlightenment in Relation to College-going, Ideology, and Other Variables: A Zogby Survey of Americans, by Daniel B. Klein of George Mason University and Zeljka Buturovic of Zogby International, I decided to read it for myself, properly.

The paper, you see, concludes that politically left-wing people know a lot less about economics than do politically right-wing people.

This has caused something of a stir.

I deliberately avoided reading any other analysis of the paper before I read it myself, and then wrote most of this interminable post. (Had I more time, I would have made this shorter.)

Then I put a bit on the end that links to other discussions of the paper, and summarises the stuff that I missed.

I did all this instead of writing about Lego printers or something because I've been thinking, recently, about scientific papers and their interpretation and reporting. Mass-media science reporting has, I think, never been lousier. If you really pay attention to mass-media science reports, you'll hardly have time to worry about GMOs giving you CJD and UFOs landing at HAARP, because you'll be too busy clearing out your pantry, because whatever stuff cured cancer last week has now been conclusively shown to cause it.

You can usually still get reasonable interpretations of new findings from something like Scientific American, but normal everyday news sources are worse than useless, spraying anti-facts all over the place daily.

If you want to know what some particular piece of research really means, you therefore have to go to the source yourself. This is an important skill for modern humans.

It's tempting to not read the actual paper at all - or just scan the abstract - and then read what some blogger you like said about it. But you really should dig into papers properly, at least occasionally. Now that it's so often possible to have the whole thing in front of you, for free, in a matter of seconds, there's no excuse for just reading what some newspaper journalist mistakenly thinks he read.

I suspected that Economic Enlightenment in Relation to Blah Blah Blah was just a barrow-pushing junk survey, because that's what a lot of political polling is. Complete-garbage surveys are all that various interest groups need to move their barrows along, after all. It only took Sir Humphrey a minute to persuade Bernard that he simultaneously supported and opposed the reintroduction of conscription, so why try harder? Why cover your ideological nakedness with a real fig leaf, when a scrap of paper's cheaper?

Whether or not this particular study was junk, I knew I could easily find some journalist telling me that it was. Or that it wasn't. So I downloaded it myself (PDF), and read it. Feel free to do so yourself, before reading on to have my own opinions stamped on your brain.

The Buturovic/Klein Economic Enlightenment survey has a pretty clear conclusion. To the great delight of the Objectivist playpen in the Wall Street Journal's op-ed pages, this survey found that "the left" in the USA "flunks Econ 101".

The United States has, of course, no actual left wing that any of us foreigners can identify. When US "liberals" agree with policies that were too authoritarian for Richard Nixon, then they're only "liberal" in a relative sense. (That's right - US political blocs are defined by relativism! Or relativity, or something! My god - it'll be social justice next!)

The elephant-in-the-room problem with the Buturovic/Klein paper is that although it was conducted by Zogby International, a respected and above-board organisation, the actual respondents were from an "Online Panel", not a proper random sample. Zogby invited 64,000 people to take part in the survey, and those 64,000 were of course already biased in favour of people who can access the Internet and care to be involved in surveys.

(The Zogby Online Panel appears to be something you can sign up for. Surely you don't have to actively sign up to be surveyable in this way... but if you don't, how can they contact you without spamming? If the Online Panel is actually the same near-meaningless fluff as TV ratings, then the whole project is in dire danger at the outset.)

Anyway, only 4,835 of the people invited to participate responded. That's a 7.6% response rate, which is about par for the course for entertainment-value-only Internet polls. It's a serious, serious problem for any survey that's meant to have some scientific rigour.
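Why does a 7.6% response rate matter so much? The problem isn't really the small number of respondents. It's that the people who choose to respond may differ systematically from the people who don't. Here's a minimal Python sketch of that effect. Only the 64,000 and 4,835 figures come from the paper. The population, the 30% "trait" share (say, high household income) and the response probabilities are all made up for illustration, as is the helper `simulate_self_selection`.

```python
import random

# Figures from the survey: people invited, people who responded.
INVITED = 64_000
RESPONDED = 4_835
response_rate = RESPONDED / INVITED
print(f"Actual response rate: {response_rate:.1%}")  # 7.6%

def simulate_self_selection(pop_size=100_000, trait_share=0.30,
                            p_respond_with=0.15, p_respond_without=0.05,
                            seed=1):
    """Hypothetical population in which people with some trait are three
    times as likely to respond. Returns (response rate, true trait share,
    trait share among respondents)."""
    rng = random.Random(seed)
    population = [rng.random() < trait_share for _ in range(pop_size)]
    respondents = [
        has_trait for has_trait in population
        if rng.random() < (p_respond_with if has_trait else p_respond_without)
    ]
    true_share = sum(population) / pop_size
    sample_share = sum(respondents) / len(respondents)
    return len(respondents) / pop_size, true_share, sample_share

rate, true_share, sample_share = simulate_self_selection()
print(f"Simulated response rate:    {rate:.1%}")
print(f"Trait share in population:  {true_share:.1%}")
print(f"Trait share in respondents: {sample_share:.1%}")
```

With these invented numbers, roughly 8% of the population responds, which is close to the real survey's rate. But the trait shows up in nearly twice as large a share of respondents as of the population. Collecting more responses the same way wouldn't fix that, because the bias is built into who answers.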

You can try to balance things out by weighting responses so that the responders' demographics match those of the whole population; Zogby usually seem to do that with their own Internet surveys. But the authors of this paper didn't do it, and may not actually have been able to do it, if subsets-of-a-subset problems would have left them giving large weight multiples to very, very small slices of the respondent base, giving rise to error bars taller than the whole chart.
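Here's a minimal sketch of the kind of weighting described above, usually called post-stratification. Each respondent in a demographic cell gets weight = (that cell's share of the population) / (its share of the sample). All the cell names, counts, population shares and scores below are hypothetical, not Zogby's. The last function computes Kish's effective sample size, a standard rough measure of how much weighting costs you in precision.

```python
def poststrat_weights(sample_counts, population_shares):
    """Weight for each cell = population share / sample share."""
    n = sum(sample_counts.values())
    return {cell: population_shares[cell] / (count / n)
            for cell, count in sample_counts.items()}

def weighted_mean(cell_means, sample_counts, weights):
    """Mean score with every respondent in a cell given that cell's weight."""
    num = sum(cell_means[c] * sample_counts[c] * weights[c] for c in sample_counts)
    den = sum(sample_counts[c] * weights[c] for c in sample_counts)
    return num / den

def kish_effective_n(sample_counts, weights):
    """(sum of weights)^2 / (sum of squared weights), over all respondents."""
    total_w = sum(sample_counts[c] * weights[c] for c in sample_counts)
    total_w2 = sum(sample_counts[c] * weights[c] ** 2 for c in sample_counts)
    return total_w ** 2 / total_w2

# Hypothetical survey of 1,000 people, badly short of young respondents.
sample_counts = {"18-29": 50, "30-64": 800, "65+": 150}
population_shares = {"18-29": 0.20, "30-64": 0.60, "65+": 0.20}
cell_means = {"18-29": 3.0, "30-64": 2.0, "65+": 1.5}  # made-up scores

n = sum(sample_counts.values())
w = poststrat_weights(sample_counts, population_shares)
for cell, weight in w.items():
    print(f"{cell:>6}: weight {weight:.2f}")
unweighted = sum(cell_means[c] * sample_counts[c] for c in sample_counts) / n
print(f"unweighted mean {unweighted:.3f}, "
      f"weighted mean {weighted_mean(cell_means, sample_counts, w):.3f}")
print(f"effective sample size {kish_effective_n(sample_counts, w):.0f} of {n}")
```

Even this tidy example costs about a third of the effective sample. If a cell held only 5 people standing in for 20% of the population, its weight would be 40, and the error bars would balloon. That's the subsets-of-a-subset problem.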

Here, though, is one of several points where this paper doesn't follow the standard Crap-Survey script. The authors didn't weight the data, but they make it all available online, so you can see what they were actually working from, and massage it yourself if you like.

(Here's the "survey instrument" in Word DOC format; here are the results in Excel XLS format.)

You may be wondering how the "Economic Enlightenment" survey defines "Economic Enlightenment". And, indeed, how it defines "the left" and "the right".

Well, Economic Enlightenment - "EE" from now on - is meant to be your ability to understand economic reality. Like, I suppose, if you don't have much money and you understand that it's probably better to rent accommodation than to take out a large zero-deposit mortgage, then your decision to rent is an economically-enlightened one.

I think it's uncontroversial that most people in the modern Western world don't have a lot of economic sense. The credit-card companies wouldn't be sitting on such a wonderful green gusher of cash if people-in-general realised that holding your damn horses until you can actually afford something, rather than borrowing at 20%-plus to buy it, will let you own a lot more stuff. People keep borrowing big to buy a brand new car, too; I'd put that decision on my definitely-not-EE list.
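To put a rough number on the credit-card point: for an ordinary amortising loan, the fixed monthly payment is P × r / (1 - (1 + r)^(-n)), where P is the amount borrowed, r the monthly interest rate and n the number of payments. Here's a Python sketch assuming a hypothetical $1,000 purchase at 20% a year, paid off in equal instalments over two years. Real card terms, with minimum payments, fees and daily compounding, can differ considerably.

```python
def monthly_payment(principal, annual_rate, months):
    """Fixed payment that repays an amortising loan in `months` instalments."""
    r = annual_rate / 12  # monthly interest rate
    return principal * r / (1 - (1 + r) ** -months)

PRICE = 1_000   # hypothetical purchase
APR = 0.20      # hypothetical 20% annual rate
MONTHS = 24

payment = monthly_payment(PRICE, APR, MONTHS)
total_paid = payment * MONTHS
print(f"Monthly payment: ${payment:.2f}")
print(f"Total paid:      ${total_paid:.2f} "
      f"({total_paid / PRICE - 1:.0%} more than the price)")
```

If you saved that same payment of about $51 a month instead, you'd have the $1,000 in under 20 months, and you'd keep the roughly $220 of interest.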

(Actually, I think there's a bit of a no-real-left-wing sort of situation in the EE world, too. Look at all of the people in the affluent West who consider it completely normal to be deep, deep in entirely optional debt for your whole adult life. In comparison, anybody with the vaguest semblance of actual money-sense looks like some sort of Oracle of Infallible Wisdom. I dunno what Warren Buffett would count as on this scale; perhaps he'd be a strongly-superhuman Banksian economic Mind.)

The EE survey admits on the first page that their "designation of enlightened answers" may be a "controversial interpretive issue", and that they specifically went out huntin' for "leftist mentalities", without asking questions slanted the other way.

This is another big and significant problem.

Their page-3 example of a survey question, for instance, is "Restrictions on housing development make housing less affordable", with the usual multiple-choice answers from "Strongly Agree" to "Strongly Disagree" and "Not Sure".

The authors use this question as an example of how they "Gauge Economic Enlightenment", because a question apparently has to have at least this definite an "enlightened" answer to be worthy of contributing to an EE score.

But they admit that there are still confounding factors, because different people will have different opinions about what the question's really asking.

What sort of "restrictions", for instance, might we be talking about? Does "affordable" relate to initial purchase price alone, or purchase price plus maintenance and making-good of a shoddily-built house, treatment for the lung disease you got from un-"restricted" asbestos insulation batts shedding fibres into the HVAC ducts, et cetera? What does it "cost" if an electrical fault burns the house down, and you die? What if an un-"restricted" housing industry forms a cartel that builds houses out of damp cardboard and forces poor people to live in them - for a price that's exactly as un-"affordable" as makes the cartel the most money - or live in the park?

The paper doesn't really go into that much detail in its brief discussion of confounding effects, which, given that its respondents all live in the troubled-but-not-total-chaos current mainstream US economy, is probably fair enough. There are infinitely many possible wiggy reasons why someone might mean something strange by their answer to what you thought was a clear question, and if your sample's big enough and random enough (which, once again, is a problem for this paper...) you can iron most of that out.

But I think there's one confounder that should have been mentioned specifically:

Deliberate lies.

Someone who's sympathetic to the current US "radical conservative" movement may personally believe that Sarah Palin is an idiot, but tell a pollster that she's a genius, just to Fight the Good Fight. Similarly, someone who wasn't paying attention during the most recent interminable US Presidential campaigns and so was under the impression that Obama had promised to immediately end both wars and nationalise Halliburton, may tell a pollster that he's 100% happy with the President even though he's actually very disappointed.

(For the same reason, I find it difficult to believe any survey about the sexual activities of teenagers. Religious loonies often seem to get very excited when a survey comes back saying that 90% of 14-year-old boys have had sex a thousand or more times. I don't know whether I'd rather those loonies are so upset because they actually believe the survey, or if they know that it's BS and are feigning belief to advance their own agenda.)

There's definitely some slanted question-selection going on in the EE survey; they admit as much. They had 16 multiple-choice questions in the actual survey, but they chose only eight of those questions to make up the final EE score for respondents. They say they eliminated the questions that were "too vague or too narrowly factual, or because the enlightened answer is too uncertain or arguable" - but I'd say the "narrowly factual" part shouldn't be a problem at all. Ask someone what an "interest rate" or "inflation" is; if they don't know, their EE score drops. An awful lot of people don't seem to understand income-tax brackets; there's another great question for a more factual EE test.

(Perhaps such questions would measure mere economic "literacy", not "enlightenment", though.)

Several of the dropped questions also seem to me to be more likely to get "leftist" answers, since they include, for instance, "Business contracts benefit all parties" and "In the USA, more often than not, rich people were born rich".

But here, again, is evidence that this isn't a pure obviously-fake barrow-pushing trash-poll. The paper tells you they dropped the questions, and why (rightly or not), and the authors also tell you what the dropped questions were. Leaving that last detail out is exactly the sort of thing that trash-pollsters do, because that way you can avoid disclosing that they, for instance, asked 50 questions and published only the ones whose aggregate answers happened to support their thesis.

(The downloadable full results also include responses to all of the dropped questions. I'd do some analysis of that data, if this post wasn't already the size of a holiday novel.)

On page 4 of the paper, there's a list of the eight questions they used, and the answers they deemed "Unenlightened":

1. Restrictions on housing development make housing less affordable.

   Unenlightened: Disagree

2. Mandatory licensing of professional services increases the prices of those services.

   Unenlightened: Disagree

3. Overall, the standard of living is higher today than it was 30 years ago.

   Unenlightened: Disagree

4. Rent control leads to housing shortages.

   Unenlightened: Disagree

5. A company with the largest market share is a monopoly.

   Unenlightened: Agree

6. Third-world workers working for American companies overseas are being exploited.

   Unenlightened: Agree

7. Free trade leads to unemployment.

   Unenlightened: Agree

8. Minimum wage laws raise unemployment.

   Unenlightened: Disagree
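Mechanically, a score like this is just a count of how many of the eight answers fall on the "unenlightened" side. Here's a minimal Python sketch. I'm assuming that both the moderate and the strong form of an answer (for example "Somewhat Agree" and "Strongly Agree") count as one unenlightened response, and that "Not sure" counts as neither. The "Somewhat" labels are also my assumption about the answer scale, and the paper's exact scoring rules may differ.

```python
# Direction of the answer the paper deems "unenlightened", per question.
UNENLIGHTENED = {
    1: "disagree", 2: "disagree", 3: "disagree", 4: "disagree",
    5: "agree", 6: "agree", 7: "agree", 8: "disagree",
}

def direction(answer):
    """Map an answer to 'agree', 'disagree', or None (e.g. 'Not sure')."""
    a = answer.strip().lower()
    if a.endswith("disagree"):  # checked first: "disagree" also ends in "agree"
        return "disagree"
    if a.endswith("agree"):
        return "agree"
    return None

def unenlightened_count(responses):
    """Number of answers (0-8) falling on the 'unenlightened' side."""
    return sum(1 for q, ans in responses.items()
               if direction(ans) == UNENLIGHTENED[q])

# Hypothetical respondent who thinks some overseas workers are exploited
# and doubts that minimum wage laws raise unemployment.
respondent = {
    1: "Strongly Agree", 2: "Somewhat Agree", 3: "Strongly Agree",
    4: "Not sure", 5: "Somewhat Disagree", 6: "Somewhat Agree",
    7: "Strongly Disagree", 8: "Strongly Disagree",
}
print(unenlightened_count(respondent))  # 2
```

A scheme like this gives no credit for reasoning. The respondent above loses a point on question 6 whatever their reasons for agreeing.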

To keep this post under the 50,000-word mark, I leave determination of all possible well-reasoned but "unenlightened" answers to these questions as an exercise for the reader. Questions 1, 2 and 8 seem pretty straightforward, if simplistic, to me.

At first I also thought it was pretty hard to reason your way to the "wrong" answer for question 3 - but then I realised how many ways there are to measure "standard of living", besides "number of features in your car" and "number of televisions in your house". Is "standard of living" the same as "mean household income"? If not, how not? Answers on a postcard, please.

And the rest of the questions, not to put too fine a point on it, seem to me to be wide friggin' open. Not least because of the lack of any clear definition of terms.

I know, for instance, that there are people in the USA who seriously advance the idea that no Third-World workers for US companies are being exploited in any way, because apparently being paid a quarter of a living wage toward the large debt you incurred when you started work in the crap-for-fat-Westerners factory isn't exploitation. But if you take the completely crazy position that maybe some people in the Third World are being exploited by US companies, and therefore agree with assertion 6, you're officially Un-Enlightened.

The very next paragraph of the paper, entertainingly, says that any objection such as this is "tendentious and churlish".

I may now find myself required to challenge the pinguid, sesquipedaliaphiliac diplozoon responsible for this paper to a duel.

Let us now set aside the authors' lack of imagination regarding valid objections to their definition of enlightenment, and their questionable... question... selection, and move on to the demographic differences between the people who scored high and low in EE (whatever, if anything, the EE score actually measures).

The paper's headline "discovery" is that going to college didn't give respondents a statistically-significant higher EE score.

I imagine that tertiary education specifically involving economics - or just a weekend personal-finance-management seminar, for that matter - would have an effect on the EE score - at least, for the four questions that really do seem to have pretty clear objectively-correct answers. But since most university students wisely avoid the dismal science with the same zeal with which sane people avoid the Continental postmodernists, it's hardly surprising that just passing through a university does not cause one to pick up knowledge of economics by osmosis, without studying it.

(Colleges won't make you study it, either. Later in the paper, it points out that of "50 leading universities" surveyed by these people, exactly none had compulsory economics courses.)

It's practically a truism that knowing a great deal about one subject has little to no effect on your knowledge of other subjects. Actually, people who're very knowledgeable about one thing often incorrectly assume that they've got the right end of the stick about some completely different subject. Scam artists love Ph.D.s. (See also, "Engineers' Disease".)

Research that confirms the "obvious" is still valuable, even if it's routinely reported in News Of The Weird stories and derided by politicians who're trying to reduce "wasteful" government spending. (By, of course, taking funding away from anything that they reckon sounds a bit silly, and giving it to the people who've given them a rent-free flat.)

Still, though, EE and college education being uncorrelated doesn't look like a big discovery to me. (If the EE testing method itself is fatally flawed, of course, then no correlation, or lack thereof, means anything.)

What else you got, guys?

Well, there's the left/right thing.

One very simple way of pigeonholing people as "left" or "right" in political ideology is just to ask them, which this survey did. Once again, the lack of a real left wing in the US political dialogue means that a reborn Dwight D. Eisenhower would now be categorised as a State-trampling tax-and-spend socialist enemy-emboldener - but never mind that for now. The survey asked respondents to categorise themselves as "Progressive/very liberal", "Liberal", "Moderate", "Conservative", "Very conservative", "Libertarian", "Not sure" or "Refuse to answer".

And lo, those who admitted that they were infected with the terrifying disease of "progressivism" scored the very worst on the EE scale, with a neat diminishing-wrongness progression as you proceed toward "Very Conservative". And then a score a little better again - though not statistically-significantly so - for the brave and hardy "Libertarians"!

Once again, this was presented according to proper scientific standards, with a full breakdown and confidence interval listed. The error bars are wide enough to, as I said, mean the Very Conservatives might actually have beaten the Libertarians, but the overall order is clear. And the authors mention, again, that questions specifically aimed at "typical conservative or libertarian policy positions" might have changed the results. (Like, I dunno, maybe "Illegal immigrants are a major drain on the American taxpayer.")
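For readers who haven't met error bars before: a rough 95% confidence interval for a group's mean score is mean ± 1.96 × (standard deviation / √n). When two groups' intervals overlap heavily, the survey can't confidently rank them. The more precise check is an interval for the difference between the two means, which should exclude zero. Here's a Python sketch using the normal approximation and made-up summary statistics, not the paper's figures.

```python
from math import sqrt

Z95 = 1.96  # normal-approximation multiplier for a 95% interval

def mean_ci(mean, sd, n):
    """Approximate 95% confidence interval for a group mean."""
    se = sd / sqrt(n)
    return mean - Z95 * se, mean + Z95 * se

def diff_ci(m1, sd1, n1, m2, sd2, n2):
    """Approximate 95% confidence interval for the difference m1 - m2."""
    se = sqrt(sd1 ** 2 / n1 + sd2 ** 2 / n2)
    d = m1 - m2
    return d - Z95 * se, d + Z95 * se

# Hypothetical groups: (mean score, standard deviation, group size).
a = mean_ci(1.3, 1.2, 150)
b = mean_ci(1.2, 1.3, 700)
overlap = a[0] <= b[1] and b[0] <= a[1]
lo, hi = diff_ci(1.3, 1.2, 150, 1.2, 1.3, 700)

print(f"Group A: {a[0]:.2f} to {a[1]:.2f}")
print(f"Group B: {b[0]:.2f} to {b[1]:.2f}  (overlap: {overlap})")
print(f"Difference A - B: {lo:.2f} to {hi:.2f}")
```

Overlapping intervals are a rough warning sign, not a formal test. Two intervals can overlap slightly while the difference is still significant, which is why the interval for the difference is the one to check.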

But they, again, conclude, "Naaah." (I paraphrase.)

Next we get a bunch of little tables demonstrating that people who voted for Obama have miserable EE (but people who voted for Nader or the Green Party's Cynthia McKinney score even worse - though the error bars are of course really large for these unpopular "wasted vote" candidates).

Who else scored badly? Oh, just black people, Hispanics, citydwellers, Jews and Muslims, union members, and people with no direct or familial connection with the armed forces.

Who else scored well? "Atheist/realist/humanists", people who did not consider themselves to be "a born-again, evangelical, or fundamentalist Christian", and people who go to church "rarely" or "never". I'm not sure what this is supposed to mean, but it's amusing.

Oh, and "Married" people beat every kind of single person, and beat by a wider margin people in a "civil union/domestic partnership". "Asian/Pacific" respondents scored even better than white folk. NASCAR fans scored better than others, too. (Somewhere in America there must be a married Japanese-American NASCAR-loving atheist Republican who has a perfect model of the entire world economy turning and twinkling in his mind's eye.)

And Nader and McKinney voters may have scored miserably, but people who voted for the Libertarian candidate Bob Barr got an average score even better than those wily McCain voters, though again with a big enough error bar that they might not really have scored higher in a bigger survey.

Registered Libertarian voters scored better than people affiliated with different parties, and in response to "Do you consider yourself to be mostly a resident of: your city or town, America, or planet earth", Planet Earthers scored worst, followed by "not sure/refused", then "my town", then "America". (Presence or absence of a subsequent "Fuck Yeah!" was not recorded.)

None of this is quite what a modern US "radical conservative" would really want to see, but only because religious beliefs and a good EE score appear to be incompatible (though "Other/no affiliation" for the religion question scored even worse than those silly Muslims!). Apart from that, the results are driving straight down the radical-conservative road. In brief, the authors' thesis that conservatives and Libertarian-ish people have higher Economic Enlightenment than members of the Pinko-Green Communist Alliance was solidly supported across the board.

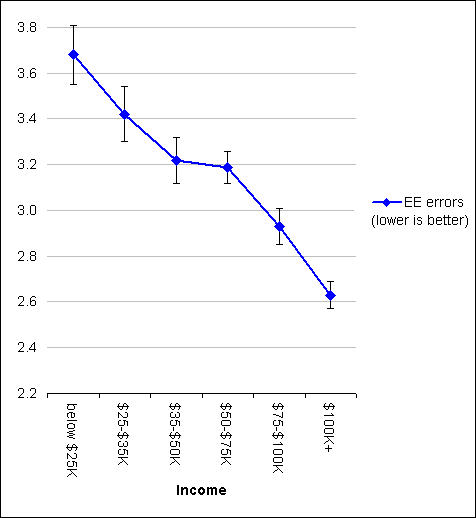

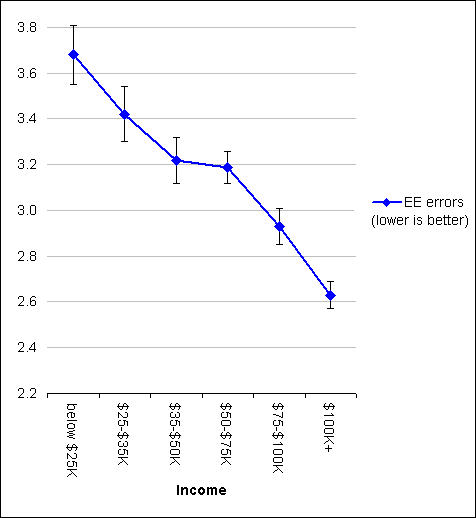

There were several really nice line-fits, too. I mean, check this out:

The more you make, the more economically enlightened you are! Makes sense, doesn't it, kids?

But wait a minute. Look at that tiny little error bar for those brilliant high-EE "$100K+" respondents. All else being equal, a smaller error bar means a larger sample. Did they really have more respondents making $100,000 or more than in any of the other income brackets?
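Here's why a bigger group gets a shorter error bar: the standard error of a mean is s / √n, so the bar shrinks with the square root of the sample size. This Python sketch takes the paper's own income-bracket counts (the breakdown given below) and estimates how tall each bracket's error bar should be relative to the $100K+ one. It assumes that scores are equally spread out in every bracket. That assumption is mine, and it may well be wrong.

```python
from math import sqrt

# Respondents per household-income bracket, from the survey's raw data.
counts = {
    "Below $25K": 277,
    "$25-$35K": 337,
    "$35-$50K": 541,
    "$50-$75K": 941,
    "$75-$100K": 757,
    "$100K+": 1389,
}

def relative_error_bar(n, n_ref):
    """Error-bar height for a group of size n relative to one of size n_ref,
    assuming both have the same standard deviation (SE = s / sqrt(n))."""
    return sqrt(n_ref / n)

reference = counts["$100K+"]
relative = {bracket: relative_error_bar(n, reference)
            for bracket, n in counts.items()}
for bracket, height in relative.items():
    print(f"{bracket:>10}: {height:.2f}x")
```

On that assumption, the Below-$25K bar should be roughly 2.2 times as tall as the $100K+ bar. That fits with the tiny $100K+ error bar.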

According to the raw data - yes, they did! The breakdown for the 4,835 respondents was:

| Household income | Respondents | Share of respondents |
|---|---|---|
| No answer | 593 | 12% |
| Below $25K | 277 | 6% |
| $25-$35K | 337 | 7% |
| $35-$50K | 541 | 11% |
| $50-$75K | 941 | 19% |
| $75-$100K | 757 | 16% |
| $100K+ | 1389 | 29% |

Now, this was total household income, not the personal income of the person answering the survey. But the median and mean household incomes for the USA in 2004 were $44,389 and $60,528, respectively. I doubt that either figure has shot up past $100,000 in the last six years.

When 29% of respondents are making more than twice the median household income - the number of $100K-plus responses is only marginally smaller than the $35-$50K and $50-$75K respondents put together - serious "skewed sample" alarm bells should start ringing.
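The arithmetic here is easy to check. This Python sketch recomputes each bracket's share from the raw counts and compares the $100K+ group with the two middle brackets combined. It also shows how far $100,000 sits above the 2004 US median and mean household incomes quoted above.

```python
# Respondents per household-income bracket, from the survey's raw data.
counts = {
    "No answer": 593,
    "Below $25K": 277,
    "$25-$35K": 337,
    "$35-$50K": 541,
    "$50-$75K": 941,
    "$75-$100K": 757,
    "$100K+": 1389,
}
MEDIAN_2004 = 44_389  # US median household income, 2004
MEAN_2004 = 60_528    # US mean household income, 2004

total = sum(counts.values())
shares = {bracket: round(100 * n / total) for bracket, n in counts.items()}
middle_two = counts["$35-$50K"] + counts["$50-$75K"]

print(f"Total respondents: {total}")
for bracket, pct in shares.items():
    print(f"{bracket:>10}: {pct}%")
print(f"$100K+ respondents: {counts['$100K+']}, $35-$75K combined: {middle_two}")
print(f"$100K is {100_000 / MEDIAN_2004:.2f}x the median and "
      f"{100_000 / MEAN_2004:.2f}x the mean")
```

The counts add up to the stated 4,835 respondents, and the rounded percentages check out. The 1,389 respondents in the $100K+ bracket all report household incomes at least 2.25 times the 2004 median.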

The paper does have quite a bit of discussion of the problems with their testing technique, but of course concludes that none of them invalidate the study. Or mean that they should have applied weighting to try to un-skew their strange self-selected sample.

(There's also, once again, the possibility of deliberate deception. Respondents might seek to give their ideology-driven answers to other questions more weight by claiming household income much higher than what they actually make.)

On finishing reading the paper - which, gentle reader, means that the end of this epic saga of a blog post is also in sight - I figured that the main problems with it were this obviously unbalanced self-selected sample, the lack of any weighting to attempt to compensate for the sample bias, and the selection of the questions used to construct the EE score.

Apart from that, I reckoned this was a decent paper. It's rather sad that the best you can say about so many media-touted studies is that they conform to the minimum standards for an academic paper - presenting methodology and results, and not blatantly lying. But still, it's better than nothing.

("Yeah, that car he sold me WAS full of rust, but at least it really was a car, not just a couple of bikes covered with tape.")

Perhaps I'm so easily impressed by any paper that achieves the basic benchmarks for publication in a peer-reviewed journal because I'm so used to examining the rather different evidentiary paperwork of true out-there crackpots. Those guys often insist that their magic potion or antigravity machine has been tested by some prestigious institution or corporation - UCLA, Bristol-Myers Squibb, the US military, and of course poor old NASA. But when you ask who actually did the test, and when, and whether it was published anywhere... well, you may end up with a photocopy of a photocopy of a photocopy of something that might originally have been on university letterhead. Or test results from special secret scientists or car-gizmo testers who always seem to find things that nobody else can. But you'll probably only receive abuse.

Compared with that, this paper is a magnificent solid-gold triumph of the scientific method.

What, I now wondered, do people who do not bear the mental scars of numerous encounters with extremely independent thinkers make of the Buturovic/Klein study?

I returned to the page that, seemingly years ago, alerted me to the study's existence in the first place: This question on Ask MetaFilter.

Commenters there linked to this FiveThirtyEight piece by Nate Silver - who has an economics degree.

Silver has previously written that Zogby's "regular polls" were acceptably accurate in the last US Presidential election. But "Zogby Interactive", the "Internet Panel", has consistently been appallingly inaccurate. Because, yes, you really do get on the Internet Panel by just signing up at the Web site!

Knowing this, I feel I now have no option but to class any actual academic researcher who uses the Zogby Internet Panel, but doesn't weight the results and stretch the error bars accordingly, as being deliberately deceptive. There is no excuse for pretending that the Internet Panel is directly representative of anything but itself, even if you take care to ask an unbiased series of questions, which Buturovic and Klein clearly did not.

In this particular case, Silver once again points out the lousiness of the Zogby Internet Panel, and the questionability of the "Economic Enlightenment" questions. He also mentions that some of the questions do not have a clear answer even according to professional economists, "...as Klein should know, since he's commissioned several surveys of them."

This does further damage to the headline "college education doesn't teach economics" finding. Since professional economists themselves disagree about some of these questions, the more economics you learned at university, the more likely you may be to give the "wrong" answer to at least one of them. This turns that finding into a tautology.

Nate Silver's conclusion from this is that the study is "junk science". If Silver's post had been the only thing I read about this study then I'd agree with him; having actually read the study, I still agree with him, because what he noticed lines up with what I noticed.

Another MetaFilter commenter pointed out that the questions asked will let anybody who sticks to mainstream US "conservative" viewpoints get an excellent EE score, regardless of how well they actually understand what they're saying.

Commenters also came up with a number of theories about why the paper is the way that it is, for instance perhaps because of a conscious or, just barely possibly, unconscious desire to contribute to the US radical-conservative echo chamber about universities being hotbeds of crazy left-wing brainwashing.

(It's true, you know. By and large, the more education someone's received, the more likely they are to hold "leftist" political views. Clearly, brainwashing is the only possible explanation for this.)

And then there's the issue of mining for correlations. If you measure a lot of things and then shuffle the data around until you find something that correlates with something else, you may have discovered a real relationship. But as the number of things you compare against each other increases, the chance of finding a statistically-significant but entirely spurious correlation in there somewhere approaches one. Hunt through the data until you find two similar-looking graphs, G and R, and you may indeed have discovered that G causes R, or that R causes G, or that both have a common cause that you didn't measure. But G and R may also appear connected by a pure fluke.
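How fast does "approaches one" happen? Suppose you test many pairs of variables for correlation at the usual 5% significance level, and none of the relationships is real. The chance of at least one false positive across k tests is 1 - (1 - α)^k. This Python sketch assumes the tests are independent. Pairwise tests on shared variables aren't quite independent, so treat the numbers as an idealisation.

```python
from math import comb

def chance_of_a_false_positive(num_tests, alpha=0.05):
    """Probability of at least one p < alpha among num_tests independent
    tests when no real relationship exists anywhere."""
    return 1 - (1 - alpha) ** num_tests

for variables in (2, 5, 10, 20, 40):
    pairs = comb(variables, 2)  # number of distinct pairs of variables
    print(f"{variables:>3} variables, {pairs:>4} pairs: "
          f"{chance_of_a_false_positive(pairs):.4f}")
```

With just 20 variables there are 190 possible pairs, and at least one spurious "significant" correlation is all but guaranteed. The standard, conservative guard is the Bonferroni correction, which tests each of the k comparisons at α/k instead of α.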

The Buturovic/Klein poll contains a sort of back-door correlation-mining; the question selection seems to have guaranteed the overall "conservatives smart, liberals dumb" conclusions.

(Another commenter was surprised that there wasn't any Laffer Curve BS in the survey. And yet another commenter cunningly attempted to lengthen this post by mentioning a two-question ideology-versus-science test involving "deadweight loss".)

Someone also said that Zogby "is a bit of a joke among other pollsters". But I find it hard to dislike John Zogby himself:

Honestly, I could have better used the hours I spent poring over this study. You could probably have better used the time you spent reading this page.

But there is, at long last, a point to this beyond just debunking that one fatally-flawed study. It is:

The next time you see a reference to a scientific paper on a subject that interests you, if it's possible to dig up the paper without having to trek to the nearest university library or something, do so, and read it for yourself.

(If you've got a standard worse-than-useless newspaper science article in front of you and you're trying to figure out who the "scientists" are who've allegedly discovered the cure for oh-god-not-again, Google Scholar is a good place to start. Note, however, that the modern mass-media science story is based on press releases from university and corporate PR bodies, who are famous for sending puffed-up announcements about studies that haven't actually quite been published yet. If it ain't been published, you ain't gonna find it in Google Scholar, PubMed or anywhere else.)

You need advanced education to understand some scientific papers. You're probably not going to get a lot from a paper about, for instance, cryptography or particle physics, unless you're already quite knowledgeable in those fields.

But a lot of papers, definitely including many of the psychological, sociological and medical/epidemiological papers that are so popular with the newspapers, can be comprehended with nothing more than a bit of light Wikipedia use and basic knowledge about statistics and probability. That latter knowledge is, of course, useful in all sorts of other situations too.

(You can get a basic tutorial in stats and probability from Wikipedia too, or in a more structured and entertaining form from the classic How to Lie With Statistics, and/or Joel Best's much more recent Damned Lies and Statistics. John Allen Paulos' Innumeracy is also excellent.)

At the very least, it's a salutary mental exercise to understand what a good study's saying, or to figure out what's wrong with a bad one. And it can also tip you off about the reliability of different sources of information about scientific discoveries. Who knows - you may find that your local newspaper has a science reporter who's actually good!

The developed world is entirely built upon a foundation of science, and the basic interchangeable unit of scientific research is not, as one might suppose, the undergraduate lab assistant, but the published paper. To float along on the surface of the world's science and technology without ever looking at the papers from which it is all built is like eating meat daily without taking any interest in what happens in a slaughterhouse.

I've been to a slaughterhouse.

I find reading scientific papers somewhat less unpleasant.